
Tracing unexpected behaviour in Corosync's address selection

We've been looking at some of Corosync's internals recently, spurred on by one of our new HA (highly-available) clusters spitting the dummy during testing. What we found isn't a "bug" per se (we're good at finding those), but a case where the correct behaviour isn't entirely clear.

We thought the findings were worth sharing, and we hope you find them interesting even if you don't run any clusters yourself.

Disclaimer: this is only what we've found so far. We have yet to formalise it and perform further testing so that we can present proper questions to the Corosync developers.


Observed behaviour

Before signing-off on cluster deployments we run everything through its paces to ensure that it's behaving as expected. This means plenty of failovers and other stress-testing to verify that the cluster handles adverse situations properly.

Our standard clusters comprise two nodes with Corosync+Pacemaker, running a "stack" of managed resources. HA MySQL is a common example: DRBD, a mounted filesystem, the MySQL daemon and a floating IP address for MySQL.

During routine testing for a new customer we saw the cluster suddenly partition itself and go up in flames. One side was suddenly convinced there were three nodes in the cluster and called in vain for a STONITH response, while the other was convinced that its buddy had been nuked from orbit and attempted to snap up the resources. What was going on!?

It was time to start poring over the logs for evidence. To understand what happened you need to know how Corosync communicates between nodes in the cluster.

A crash-course in Corosync

Linux HA is split into a number of parts that have changed significantly over time. At its simplest, you can consider two major components:

- A cluster engine that handles synchronisation and messaging; this is Corosync

- A cluster resource manager (CRM) that uses the engine to manage services and ensure they're running where they should be; this is Pacemaker

We're only interested in Corosync, specifically its communication layer.

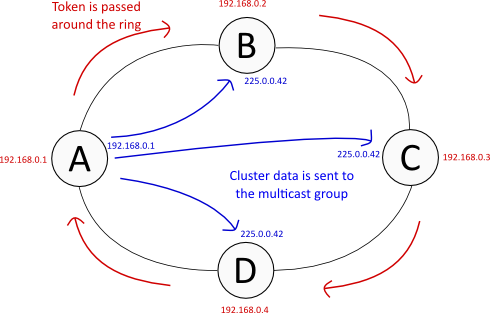

There's two major types of communication in Corosync, the shared cluster data and a "token" which is passed around the ring of cluster nodes (this is a conceptual ring, not a physical network ring). The token is used to manage connectivity and provide synchronisation guarantees necessary for the cluster to operate [1] footnote here.

The token is always transferred by unicast UDP. Cluster data can be sent either by multicast UDP or unicast UDP - we use multicast. In either case, the source address is always a normal unicast address.

Given this, a 4-node cluster looks something like this:

One convenient feature in Corosync is its automatic selection of source address. This is done by comparing the bindnetaddr config directive against all IP addresses on the system and finding a suitable match. The cool thing about this is that you can use the exact same config file for all nodes in your cluster, and everything should Just Work™.

Automatic source-address selection is always used for IPv4; it's not negotiable. It's never done for IPv6, where addresses are used exactly as supplied to bindnetaddr.

Interestingly, you only supply an address to bindnetaddr, such as 192.168.0.42. CIDR notation is not used, as might be expected when referring to a subnet. Instead, Corosync takes each of the system's addresses along with its netmask, applies that same netmask to bindnetaddr, and compares the two results. This diagram demonstrates a typical setup:

a diagram goes here

Caption: While somewhat contrived, we can see how the local address is determined as intended.
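
The masking comparison described above can be sketched in a few lines of Python. This is only an illustration of the logic, not Corosync's actual code: the function name matches_bindnetaddr and the sample interface addresses are our own assumptions.

```python
import ipaddress


def matches_bindnetaddr(bindnetaddr: str, local_ip: str, netmask: str) -> bool:
    """Return True if local_ip and bindnetaddr land in the same network
    once the local interface's netmask has been applied to both."""
    mask = int(ipaddress.IPv4Address(netmask))
    spec = int(ipaddress.IPv4Address(bindnetaddr))
    addr = int(ipaddress.IPv4Address(local_ip))
    return (spec & mask) == (addr & mask)


# Hypothetical addresses configured on one node: (address, netmask)
local_addresses = [
    ("10.1.1.1", "255.255.255.0"),
    ("192.168.0.1", "255.255.255.0"),
]

# bindnetaddr is a plain address, not a CIDR block
for ip, mask in local_addresses:
    print(ip, matches_bindnetaddr("192.168.0.42", ip, mask))
```

Because the netmask comes from the local interface rather than from the config file, any address in the same subnet works as the bindnetaddr value, which is what allows one config file to be shared by every node.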

The problem as we see it

There are two parts to the problem. Firstly, it's possible for a floating Pacemaker-managed IP to match against your bindnetaddr specification. As an example:

- 192.168.0.1/24 - static IP used for cluster traffic

- 192.168.0.42/24 - a floating "service IP" used for an HA daemon

Secondly, Corosync sometimes re-enumerates the network addresses on the host for automatic source address selection [2]. We're not 100% sure of the circumstances under which this occurs, but a classic example would be while performing a rolling upgrade of the cluster software. A normal process would be to unmanage all your resources, stop Pacemaker and Corosync, upgrade, then bring them back up again and remanage your resources.

Taken together, this can lead to the cluster getting very confused when one host's unicast address for cluster traffic suddenly changes. Consider a 2-node cluster comprising nodes A and B:

1. A's address changes

2. The token drops as a result of the ring breaking

3. B thinks A is offline: A's new address isn't allowed through our outbound firewall rules, so B doesn't receive anything from A

4. A also thinks B is offline, because B can't hear A and so never responds to it

5. In the meantime, A will "discover" a new cluster node: itself, operating on the new address!

This state is passed on to Pacemaker, which attempts to juggle the resources to satisfy its policy. For resources that were already running on A, it now sees duplicate copies, which isn't allowed. Original-A asks for new-A to be STONITH'd. Meanwhile, B is just trying to get all the resources started again.

This is decidedly not ideal. What really got us is that we hadn't seen this behaviour in any previously deployed cluster; something was different.

Normally we'll dedicate a separate subnet to cluster traffic, keeping it away from "production traffic" like load-balanced MySQL or HTTP. This time we didn't, opting to reuse the subnet. We did this because we don't like "polluting" a network segment with multiple IP subnets. We could have set up another VLAN to confine the subnet (avoiding pollution), but it would've meant putting that into our switches just for two physical machines, which seemed like overkill.

Footnotes

1. Cluster data is enqueued on each node when it's received. When a node receives the token, it processes the multicast data that has queued up, does whatever it needs to, then passes the token to the next node.

2. The enumeration happens here, both for startup and "refreshing": https://github.com/corosync/corosync/blob/master/exec/totemip.c#L342

Stuff above this line is "refined" article material

Stuff below this line is bullet-point notes that I got MC to help verify

Why Corosync can select a different address

totemip_getifaddrs() gets all the addresses from the kernel and puts them in a linked list; you can think of them as tuples of (name, IP)

- It does so by prepending to the head of the list

- As a result, "later" addresses appear at the head of the list

- When Corosync goes to traverse the list, it hits them in the reverse order of what a human would tend to expect

NB: the listing from the kernel is probably in undefined (ie. arbitrary) order?

- Corosync uses the first match it finds

Example of possible linked list

    NAME      eth1         eth0:mysql      eth0:nfs       eth0
    ADDRESS   10.1.1.1  -> 192.168.0.42 -> 192.168.0.7 -> 192.168.0.1
              (backups)    (HA floating)   (static)       (static, should be used for cluster traffic)
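
To make the ordering effect concrete, here is a small Python sketch of "prepend to the head, then take the first match". It is a model of the behaviour described in these notes, not a translation of totemip.c; the kernel listing order, the helper names and the bindnetaddr value of 192.168.0.0 are all assumptions chosen to reproduce the list above.

```python
import ipaddress
from collections import deque

# Assumed order in which the kernel reports addresses: (name, address, netmask)
kernel_order = [
    ("eth0", "192.168.0.1", "255.255.255.0"),
    ("eth0:nfs", "192.168.0.7", "255.255.255.0"),
    ("eth0:mysql", "192.168.0.42", "255.255.255.0"),
    ("eth1", "10.1.1.1", "255.255.255.0"),
]


def same_network(a, b, netmask):
    """Compare two IPv4 addresses after applying the same netmask to both."""
    mask = int(ipaddress.IPv4Address(netmask))
    return (int(ipaddress.IPv4Address(a)) & mask) == (int(ipaddress.IPv4Address(b)) & mask)


def build_list(entries):
    """Prepend each entry to the head, so later entries end up first."""
    addrs = deque()
    for entry in entries:
        addrs.appendleft(entry)
    return list(addrs)


def select_address(addrs, bindnetaddr):
    """Return (name, address) of the first entry matching bindnetaddr, or None."""
    for name, ip, netmask in addrs:
        if same_network(ip, bindnetaddr, netmask):
            return name, ip
    return None


addrs = build_list(kernel_order)
print([name for name, _, _ in addrs])
print(select_address(addrs, "192.168.0.0"))
```

With this assumed ordering the first match is the floating address on eth0:mysql rather than the static address on eth0, which is the situation we ran into.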

How the hack-patch avoids this

This is the hack fix: http://packages.engineroom.anchor.net.au/temp/corosync-2.0.0-ignore-ip-aliases.patch

- It's a huge hack

Here it is inlined:

    diff -ruN corosync-2.0.0.orig/exec/totemip.c corosync-2.0.0/exec/totemip.c
    --- corosync-2.0.0.orig/exec/totemip.c 2012-04-10 21:09:12.000000000 +1000
    +++ corosync-2.0.0/exec/totemip.c 2012-05-09 15:03:51.272429481 +1000
    @@ -358,6 +358,9 @@
                 (ifa->ifa_netmask->sa_family != AF_INET && ifa->ifa_netmask->sa_family != AF_INET6))
                 continue ;

    +        if (ifa->ifa_name && strchr(ifa->ifa_name, ':'))
    +            continue ;
    +
             if_addr = malloc(sizeof(struct totem_ip_if_address));
             if (if_addr == NULL) {
                 goto error_free_ifaddrs;
    @@ -384,7 +387,7 @@
                 goto error_free_addr_name;
             }

    -        list_add(&if_addr->list, addrs);
    +        list_add_tail(&if_addr->list, addrs);
         }

         freeifaddrs(ifap);
    @@ -449,6 +452,9 @@
             if (lifreq[i].lifr_addr.ss_family != AF_INET && lifreq[i].lifr_addr.ss_family != AF_INET6)
                 continue ;

    +        if (lifreq[i].lifr_name && strchr(lifreq[i].lifr_name, ':'))
    +            continue ;
    +
             if_addr = malloc(sizeof(struct totem_ip_if_address));
             if (if_addr == NULL) {
                 goto error_free_ifaddrs;
    @@ -484,7 +490,7 @@
                 if_addr->interface_num = lifreq[i].lifr_index;
             }

    -        list_add(&if_addr->list, addrs);
    +        list_add_tail(&if_addr->list, addrs);
         }

         free (lifconf.lifc_buf);

- Skip the IP if the name has a colon in it

- Append to the tail of the list, hopefully matching an "expected" ordering
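
The effect of those two changes can be modelled in Python as follows. As before, this is a rough sketch rather than the real code path: the kernel listing order, the helper names and the bindnetaddr value are assumptions made for illustration.

```python
import ipaddress

# Assumed order in which the kernel reports addresses: (name, address, netmask)
kernel_order = [
    ("eth0", "192.168.0.1", "255.255.255.0"),
    ("eth0:nfs", "192.168.0.7", "255.255.255.0"),
    ("eth0:mysql", "192.168.0.42", "255.255.255.0"),
    ("eth1", "10.1.1.1", "255.255.255.0"),
]


def patched_list(entries):
    """Mimic the patch: skip any name containing ':' and append the rest
    to the tail, which preserves the order the entries arrived in."""
    addrs = []
    for entry in entries:
        name = entry[0]
        if ":" in name:
            continue  # alias-style name such as eth0:mysql, ignored
        addrs.append(entry)
    return addrs


def select_address(addrs, bindnetaddr):
    """Return (name, address) of the first entry whose network matches."""
    spec = int(ipaddress.IPv4Address(bindnetaddr))
    for name, ip, netmask in addrs:
        mask = int(ipaddress.IPv4Address(netmask))
        if (int(ipaddress.IPv4Address(ip)) & mask) == (spec & mask):
            return name, ip
    return None


print(select_address(patched_list(kernel_order), "192.168.0.0"))
```

In this model the static address on eth0 is selected. Note that the filter works purely on the name, so it only helps when the floating address carries an alias-style label with a colon in it.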

Why it's necessary

Netlink is used to interrogate the kernel for addresses

- The interface/protocol used is old, and doesn't know about primary/secondary/other addresses

This basically means there's no way to specify additional criteria for address selection, or to dodge addresses from selection

- In theory Corosync could be patched to use a newer interface/protocol that can retrieve this information from the kernel

Other ways to dodge this

- Use IPv6

All previous clusters use a separate subnet and NIC for cluster traffic, so this doesn't happen

- It's happened this time because cluster traffic is in the same subnet as internal service addresses

- We didn't see a point in using a separate subnet in this case

- We don't put two subnets on the same network segment, so we would have had to configure another NIC on each machine, which means another VLAN between the two; it seemed like overkill